[1] Somers, J. (2017). Is AI riding a one-trick pony? Technology Review, 120(6), 29–36.

[2] Yuille, A. L., & Liu, C. (2021). Deep nets: What have they ever done for vision? International Journal of Computer Vision, 129(3), 781–802.

[3] Almahairi, A., Ballas, N., Cooijmans, T., Zheng, Y., Larochelle, H., & Courville, A. (2016, June). Dynamic capacity networks. In International Conference on Machine Learning (pp. 2549–2558).

[4] Ning, X., Li, W., Tang, B., & He, H. (2018). BULDP: Biomimetic uncorrelated locality discriminant projection for feature extraction in face recognition. IEEE Transactions on Image Processing, 27(5), 2575–2586.

[5] Ning, X., Nan, F., Xu, S., Yu, L., & Zhang, L. (2020). Multi-view frontal face image generation: A survey. Concurrency and Computation: Practice and Experience, e6147.

[6] Ning, X., Li, W., & Liu, W. (2017). A fast single image haze removal method based on human retina property. IEICE Transactions on Information and Systems, 100(1), 211–214.

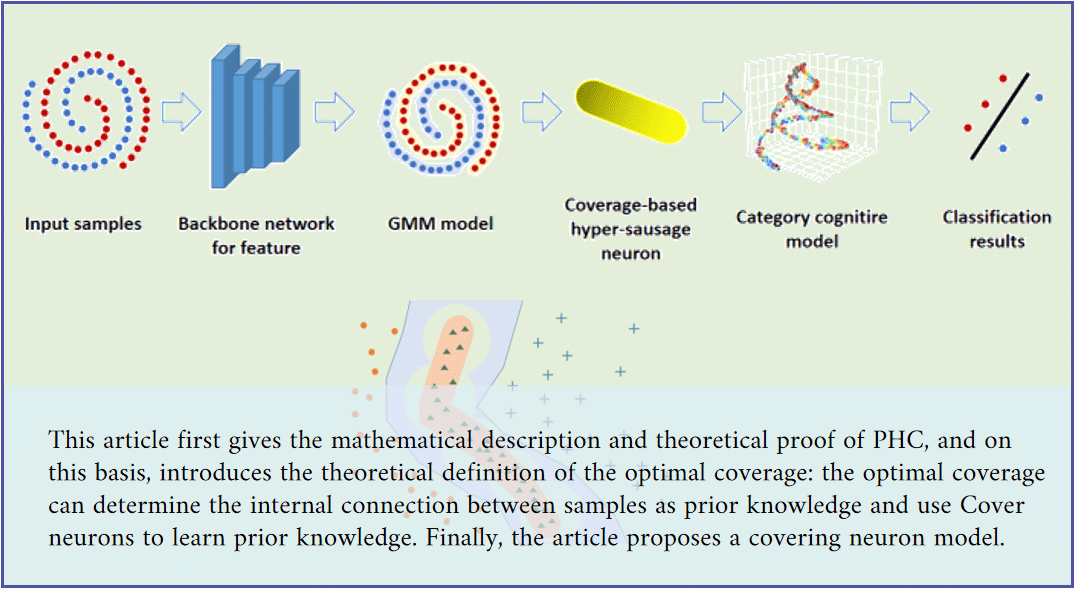

[7] Ning, X., Li, W., & Xu, J. (2018). The principle of homology continuity and geometrical covering learning for pattern recognition. International Journal of Pattern Recognition and Artificial Intelligence, 32(12), 1850042.

[8] Hu, J., Shen, L., Albanie, S., Sun, G., & Wu, E. (2020). Squeeze-and-Excitation Networks. IEEE Transactions on Pattern Analysis and Machine Intelligence, 42(8), 2011–2023.

[9] Woo, S., Park, J., Lee, J. Y., & Kweon, I. S. (2018). CBAM: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV) (pp. 3–19).

[10] Li, X., Wang, W., Hu, X., & Yang, J. (2019). Selective kernel networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 510–519).

[11] Sabour, S., Frosst, N., & Hinton, G. E. (2017, January). Dynamic Routing Between Capsules. In NIPS.

[12] Cheng, X., He, J., He, J., & Xu, H. (2019). Cv-CapsNet: Complex-valued capsule network. IEEE Access, 7, 85492–85499.

[13] Zhu, X., Su, W., Lu, L., Li, B., Wang, X., & Dai, J. (2020). Deformable DETR: Deformable Transformers for End-to-End Object Detection. arXiv preprint arXiv:2010.04159.

[14] Dosovitskiy, A., Beyer, L., Kolesnikov, A., Weissenborn, D., Zhai, X., Unterthiner, T., ... & Houlsby, N. (2020). An image is worth 16x16 words: Transformers for image recognition at scale. arXiv preprint arXiv:2010.11929.

[15] Seung, H. S. (1998). Learning continuous attractors in recurrent networks. In Advances in Neural Information Processing Systems (pp. 654–660).

[16] Seung, H. S., & Lee, D. D. (2000). The manifold ways of perception. Science, 290(5500), 2268–2269.

[17] Shou-Jue, W. (2002). Bionic (topological) pattern recognition: A new model of pattern recognition theory and its applications. Acta Electronica Sinica, 10.

[18] Shoujue, W., & Jiangliang, L. (2005). Geometrical learning, descriptive geometry, and biomimetic pattern recognition. Neurocomputing, 67, 9–28.

[19] Zhao, X. R., He, Q., & Shi, Z. Z. (2006, May). Hypersurface classifiers ensemble for high dimensional data sets. In International Symposium on Neural Networks (pp. 1299–1304). Springer, Berlin, Heidelberg.

[20] Kamgar-Parsi, B., Lawson, W., & Kamgar-Parsi, B. (2011). Toward development of a face recognition system for watchlist surveillance. IEEE Transactions on Pattern Analysis and Machine Intelligence, 33(10), 1925–1937.

[21] Muraina, I. D., Alobaedy, M. M., & Ibrahim, H. H. (2017). A framework for preserving data integrity during mobile device forensic in open source software environment. In Proceedings of the Free and Open Source Software Conference (FOSSC), Muscat, Oman (pp. 22–26).

[22] Hinton, G. E., Sabour, S., & Frosst, N. (2018, February). Matrix capsules with EM routing. In International Conference on Learning Representations.

[23] Schroff, F., Kalenichenko, D., & Philbin, J. (2015). FaceNet: A unified embedding for face recognition and clustering. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (pp. 815–823).

[24] Deng, J., Guo, J., Xue, N., & Zafeiriou, S. (2019). ArcFace: Additive angular margin loss for deep face recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 4690–4699).

[25] Huang, G. B., Mattar, M., Berg, T., & Learned-Miller, E. (2008, October). Labeled faces in the wild: A database for studying face recognition in unconstrained environments. In Workshop on Faces in 'Real-Life' Images: Detection, Alignment, and Recognition.

[26] Wolf, L., Hassner, T., & Maoz, I. (2011, June). Face recognition in unconstrained videos with matched background similarity. In CVPR 2011 (pp. 529–534). IEEE.

[27] Taigman, Y., Yang, M., Ranzato, M. A., & Wolf, L. (2014). DeepFace: Closing the gap to human-level performance in face verification. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (pp. 1701–1708).

[28] Wen, Y., Zhang, K., Li, Z., & Qiao, Y. (2016, October). A discriminative feature learning approach for deep face recognition. In European Conference on Computer Vision (pp. 499–515).

[29] Liu, W., Wen, Y., Yu, Z., Li, M., Raj, B., & Song, L. (2017). SphereFace: Deep hypersphere embedding for face recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (pp. 212–220).

[30] Wang, H., Wang, Y., Zhou, Z., Ji, X., Gong, D., Zhou, J., ... & Liu, W. (2018). CosFace: Large margin cosine loss for deep face recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (pp. 5265–5274).

[31] Shi, Y., Yu, X., Sohn, K., Chandraker, M., & Jain, A. K. (2020). Towards universal representation learning for deep face recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 6817–6826).

[32] He, K., Zhang, X., Ren, S., & Sun, J. (2016). Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (pp. 770–778).

[33] Carion, N., Massa, F., Synnaeve, G., Usunier, N., Kirillov, A., & Zagoruyko, S. (2020, August). End-to-end object detection with transformers. In European Conference on Computer Vision (pp. 213–229).

[34] Zhao, Y., Zeng, Y., & Qiao, G. (2021). Brain-inspired classical conditioning model. iScience, 24(1), 101980.

[35] Kosiorek, A. R., Sabour, S., Teh, Y. W., Hinton, G. E., & STAFFORD-TOLLEY, M. J. (2019). Stacked capsule autoencoders. Advances in Neural Information Processing Systems, 32.